I Built an AI Assistant for Our Platform. Then My Own Team Started Using It More Than I Expected.

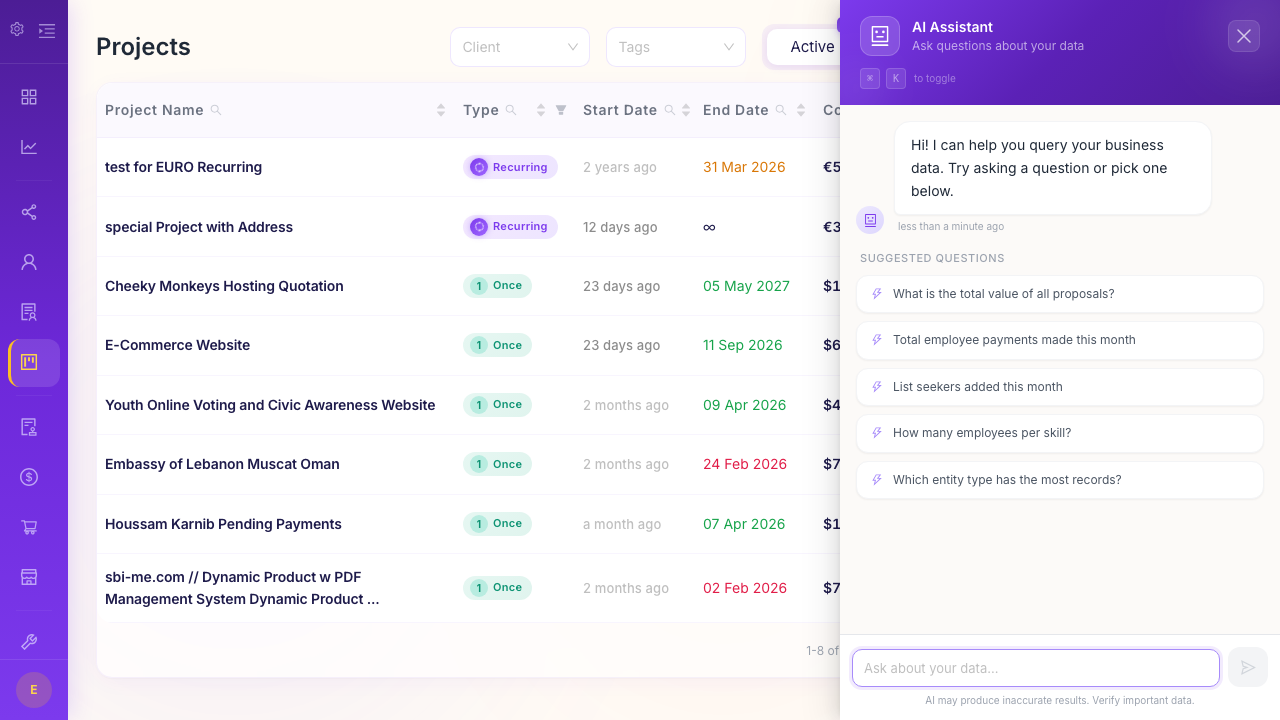

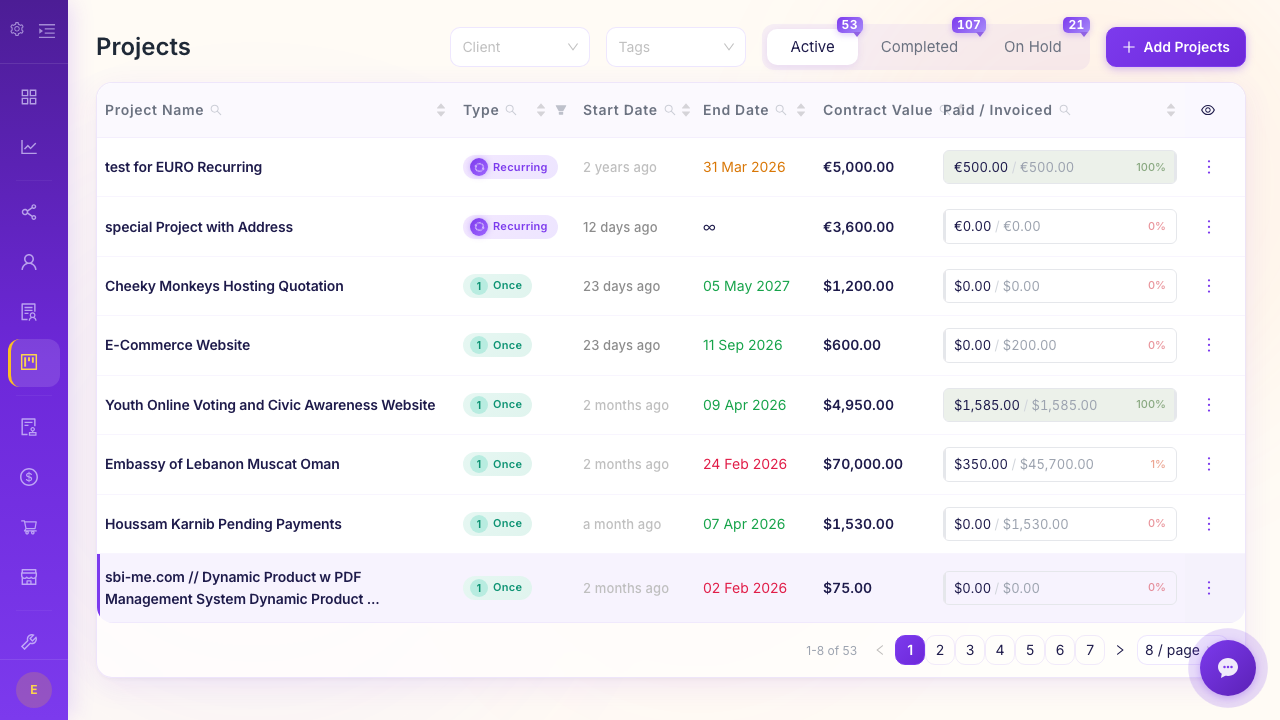

I'm the engineering lead at Belvak, and about eight months ago we shipped an AI chatbot inside our platform. The idea was straightforward: let users ask questions about their business data in plain English instead of clicking through dashboards and filters. "How many active projects do we have?" "Which invoices are overdue?" That kind of thing.

I expected it to be a nice-to-have. A feature that looks great in demos but maybe gets used once a week. I was wrong. It's now one of the most-used features in the product, and honestly, our own internal team uses it daily. Which is funny, because we built the dashboards. We know where everything is. We still prefer asking the chatbot.

The Reporting Problem That Won't Die

Every service company I've talked to has the same complaint about reporting. The data exists. It's in the system. But getting a specific answer to a specific question requires either a pre-built report (which only covers the questions someone anticipated) or manual work (export, filter, calculate, share).

Here's a real example. Our ops manager, Karim, wanted to know which clients had both active projects AND overdue invoices. Not complicated in concept. In practice, he'd need to go to the projects page, filter by active status, note the client names, go to the invoices page, filter by overdue, cross-reference the two lists. Maybe five minutes of clicking and scanning. Not terrible, but annoying enough that he often just... didn't bother. He'd handle things reactively instead of proactively.

Now he types "which clients have active projects and overdue invoices?" and gets the answer in about three seconds. He does this almost every morning. The data was always there - the friction of accessing it was the problem.
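For the curious, the question Karim types boils down to a fairly ordinary cross-table query. Here is a minimal sketch in Python using an in-memory SQLite database. The table names, column names, status values, and sample clients are all assumptions made up for illustration; the post does not describe the real schema or which database engine is used.

```python
import sqlite3

# Hypothetical schema and sample data, invented for this example.
SETUP = """
CREATE TABLE clients  (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE projects (id INTEGER PRIMARY KEY, client_id INTEGER, status TEXT);
CREATE TABLE invoices (id INTEGER PRIMARY KEY, client_id INTEGER, status TEXT);
INSERT INTO clients  VALUES (1, 'Acme'), (2, 'Globex'), (3, 'Initech');
INSERT INTO projects VALUES (1, 1, 'active'), (2, 2, 'completed'), (3, 3, 'active');
INSERT INTO invoices VALUES (1, 1, 'overdue'), (2, 2, 'overdue'), (3, 3, 'paid');
"""

# Clients that have at least one active project AND at least one overdue invoice.
QUERY = """
SELECT c.name
FROM clients AS c
WHERE EXISTS (SELECT 1 FROM projects AS p
              WHERE p.client_id = c.id AND p.status = 'active')
  AND EXISTS (SELECT 1 FROM invoices AS i
              WHERE i.client_id = c.id AND i.status = 'overdue')
ORDER BY c.name
"""


def demo_connection():
    """Build a throwaway in-memory database with the sample data."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SETUP)
    return conn


def clients_with_active_projects_and_overdue_invoices(conn):
    """Return client names matching both conditions, sorted by name."""
    return [row[0] for row in conn.execute(QUERY)]


if __name__ == "__main__":
    print(clients_with_active_projects_and_overdue_invoices(demo_connection()))
```

In the sample data only Acme has both an active project and an overdue invoice, so it is the only name returned. This is the manual cross-referencing from the dashboard workflow, collapsed into one query.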

Why We Chose Groq (and the Multi-Model Rotation)

I want to get slightly technical here because I think the engineering decisions matter for anyone thinking about adding AI to their own products.

We use Groq for inference on the chatbot, not OpenAI or Anthropic (other parts of our stack are a different story). The reason is speed. Groq runs models on custom LPU hardware that's optimized for inference, and the response times are noticeably faster than other providers we tested. When someone asks their business software a question, they want the answer in 1-2 seconds, not 5-8. That delay matters for adoption - if it feels slow, people go back to clicking through dashboards.

The more interesting decision was multi-model rotation. We have five models configured, and the system rotates between them with automatic failover. If Model A is having a slow day or returns an error, the request automatically goes to Model B. Users never see a failure.

Why five models? Because we learned the hard way that no single model endpoint has 100% uptime. API rate limits, capacity issues, model updates that temporarily degrade performance - these things happen. With rotation, our effective uptime has been over 99.9% since launch, even though individual model endpoints have had multiple incidents during that period.
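The rotation-with-failover idea can be sketched in a few lines of Python. This is an illustration of the pattern, not the production code: the class name, the model names, and the `call_model` callable are all hypothetical, and `fake_call_model` stands in for a real inference request so the example runs without network access. It also treats any exception as a reason to fail over, which a real system would probably narrow down.

```python
from typing import Callable, List, Sequence


class AllModelsFailed(RuntimeError):
    """Raised when every configured model failed for a single request."""


class ModelRotator:
    """Round-robin over models, falling through to the next one on error."""

    def __init__(self, models: Sequence[str], call_model: Callable[[str, str], str]):
        if not models:
            raise ValueError("at least one model is required")
        self._models = list(models)
        self._call_model = call_model
        self._next_start = 0  # index of the model the next request tries first

    def complete(self, prompt: str) -> str:
        n = len(self._models)
        start = self._next_start
        self._next_start = (start + 1) % n  # rotate the starting point
        errors: List[str] = []
        for offset in range(n):
            model = self._models[(start + offset) % n]
            try:
                return self._call_model(model, prompt)
            except Exception as exc:  # assumption: any error triggers failover
                errors.append(f"{model}: {exc}")
        raise AllModelsFailed("; ".join(errors))


def fake_call_model(model: str, prompt: str) -> str:
    """Stand-in for a real inference call; 'model-a' is always down here."""
    if model == "model-a":
        raise TimeoutError("simulated outage")
    return f"{model} answered: {prompt}"


if __name__ == "__main__":
    rotator = ModelRotator(["model-a", "model-b", "model-c"], fake_call_model)
    print(rotator.complete("How many active projects do we have?"))
```

Rotating the starting index spreads load across endpoints, while the inner loop is what hides a single endpoint's failure from the user. The sketch is not thread-safe; a concurrent service would need to protect `_next_start`.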

How the Chatbot Actually Works (Without Magic)

I want to demystify this because "AI-powered business intelligence" sounds like marketing fluff.

Our chatbot works by understanding the database schema - it knows what tables exist, what columns they have, and how they relate to each other. When you ask "how many projects are active?", it knows that projects live in the projects table and that there's a status column. It translates your question into a database query, runs it, and returns the result in natural language.

The schema awareness is the important part. We wrote a module that auto-generates a description of our database structure - 19 core tables, their columns, their relationships - and includes it in every prompt to the LLM. This gets cached for an hour so we're not regenerating it on every request. The LLM doesn't need to be trained on your specific data. It just needs a map.
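A rough sketch of what "a map, cached for an hour" might look like in Python follows. It assumes SQLite purely so the example is self-contained (the post does not say which database is used), and the `SchemaDescriber` class, its output format, and the injectable `clock` parameter are invented for illustration.

```python
import sqlite3
import time

CACHE_TTL_SECONDS = 3600  # one hour, matching the cache window described above


class SchemaDescriber:
    """Builds a plain-text schema summary and caches it for a fixed time."""

    def __init__(self, conn, ttl=CACHE_TTL_SECONDS, clock=time.monotonic):
        self._conn = conn
        self._ttl = ttl
        self._clock = clock  # injectable so the cache can be tested without waiting
        self._cached = None
        self._cached_at = 0.0

    def describe(self) -> str:
        now = self._clock()
        if self._cached is not None and now - self._cached_at < self._ttl:
            return self._cached  # still fresh: skip the introspection queries
        self._cached = self._build()
        self._cached_at = now
        return self._cached

    def _build(self) -> str:
        tables = [row[0] for row in self._conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
        lines = []
        for table in tables:
            # table_info rows are (cid, name, type, notnull, dflt_value, pk)
            cols = self._conn.execute(f'PRAGMA table_info("{table}")').fetchall()
            lines.append(f"{table}(" + ", ".join(f"{c[1]} {c[2]}" for c in cols) + ")")
            # foreign_key_list rows are (id, seq, table, from, to, ...)
            for fk in self._conn.execute(f'PRAGMA foreign_key_list("{table}")'):
                lines.append(f"  {table}.{fk[3]} -> {fk[2]}.{fk[4]}")
        return "\n".join(lines)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE clients (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE projects (id INTEGER PRIMARY KEY,
                               client_id INTEGER REFERENCES clients(id),
                               status TEXT);
    """)
    print(SchemaDescriber(conn).describe())
```

The resulting text would be prepended to each prompt so the model knows which tables, columns, and relationships it can reference. The trade-off of the one-hour cache is that a schema change can take up to an hour to reach the prompt.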

There are boundaries. The chatbot can read data but can't modify anything - no accidentally deleting projects because you phrased something ambiguously. It also respects the permission system. If you don't have access to financial data through the UI, the chatbot won't show it to you either. That was non-negotiable for us.
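One way to enforce both boundaries at the database layer, rather than trusting the LLM to behave, is sketched below. It uses the authorizer hook in Python's built-in `sqlite3` module as a stand-in; the post does not say how the real guard is implemented or which database is involved. The role names, the `ROLE_TABLES` mapping, and the helper functions are hypothetical.

```python
import sqlite3

# Hypothetical mapping of roles to the tables they may read.
ROLE_TABLES = {
    "ops": {"clients", "projects"},
    "finance": {"clients", "projects", "invoices"},
}


def restrict_connection(conn, role):
    """Allow only SELECTs, and only against tables the role may read."""
    allowed = ROLE_TABLES.get(role, set())

    def authorizer(action, arg1, arg2, db_name, source):
        if action in (sqlite3.SQLITE_SELECT, sqlite3.SQLITE_FUNCTION):
            return sqlite3.SQLITE_OK
        # For SQLITE_READ, arg1 is the table name and arg2 the column name.
        if action == sqlite3.SQLITE_READ and arg1 in allowed:
            return sqlite3.SQLITE_OK
        # Everything else (INSERT, UPDATE, DELETE, DROP, ...) is refused.
        return sqlite3.SQLITE_DENY

    conn.set_authorizer(authorizer)


def try_query(conn, sql):
    """Run a generated query; return its rows, or None if it was refused."""
    try:
        return conn.execute(sql).fetchall()
    except sqlite3.Error:
        return None


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE projects (id INTEGER PRIMARY KEY, status TEXT);
        CREATE TABLE invoices (id INTEGER PRIMARY KEY, amount REAL);
        INSERT INTO projects VALUES (1, 'active');
        INSERT INTO invoices VALUES (1, 1200.0);
    """)
    restrict_connection(conn, "ops")  # install the guard after setup
    print(try_query(conn, "SELECT status FROM projects"))
    print(try_query(conn, "SELECT amount FROM invoices"))
    print(try_query(conn, "DELETE FROM projects"))
```

The point of the design is that the check runs on whatever SQL the model produces, so an oddly phrased question cannot talk its way past it. On a server database the equivalent would more likely be a read-only database user plus per-role grants or views.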

The Moment I Knew It Was Working

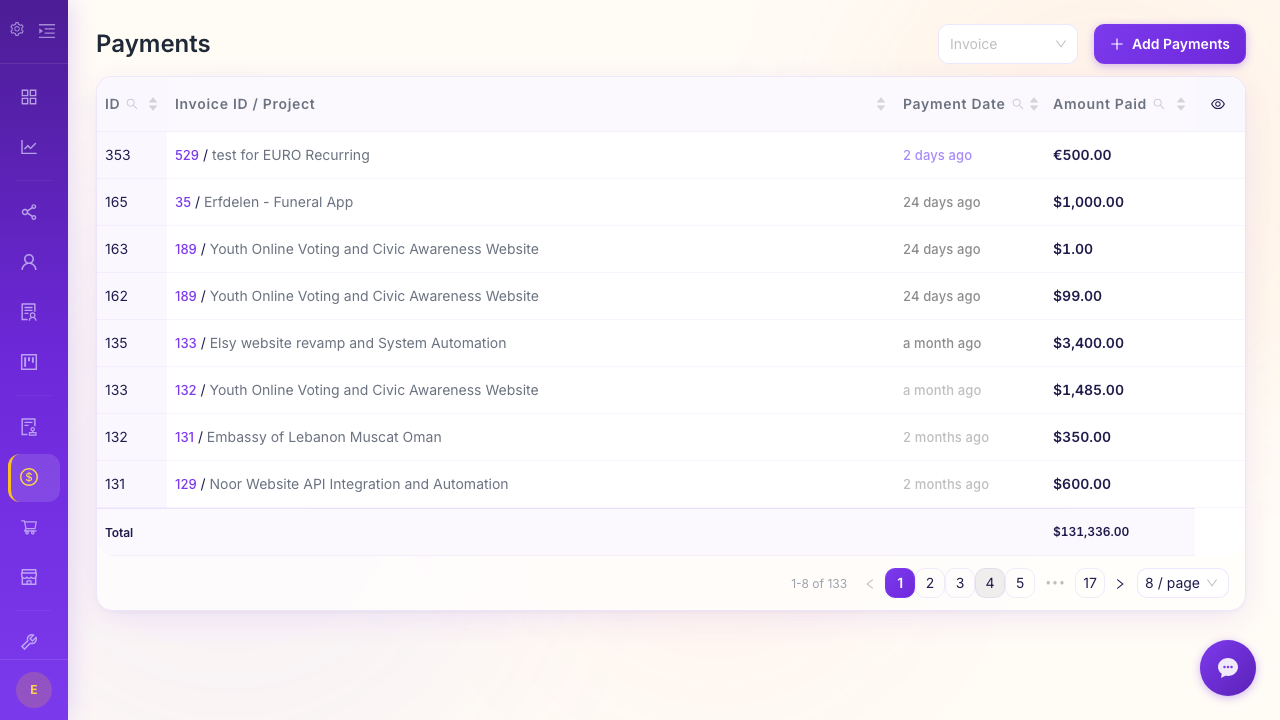

About two weeks after launch, I was in a meeting with Nadia (our founder) and a couple of others. We were discussing whether to expand into a new service area and someone asked, "what percentage of our revenue comes from maintenance contracts versus project work?"

Nadia pulled out her phone, opened the chatbot, typed the question, and had the answer before anyone could open a spreadsheet. 34% maintenance, 66% project work. The conversation moved forward immediately.

That's when it clicked for me. The value isn't in answering hard questions. It's in answering easy questions instantly. The questions everyone knows have an answer somewhere in the system, but that nobody wants to spend five minutes pulling up. When every small question gets answered in real time, meetings get shorter, decisions get faster, and people stop saying "let me get back to you on that."

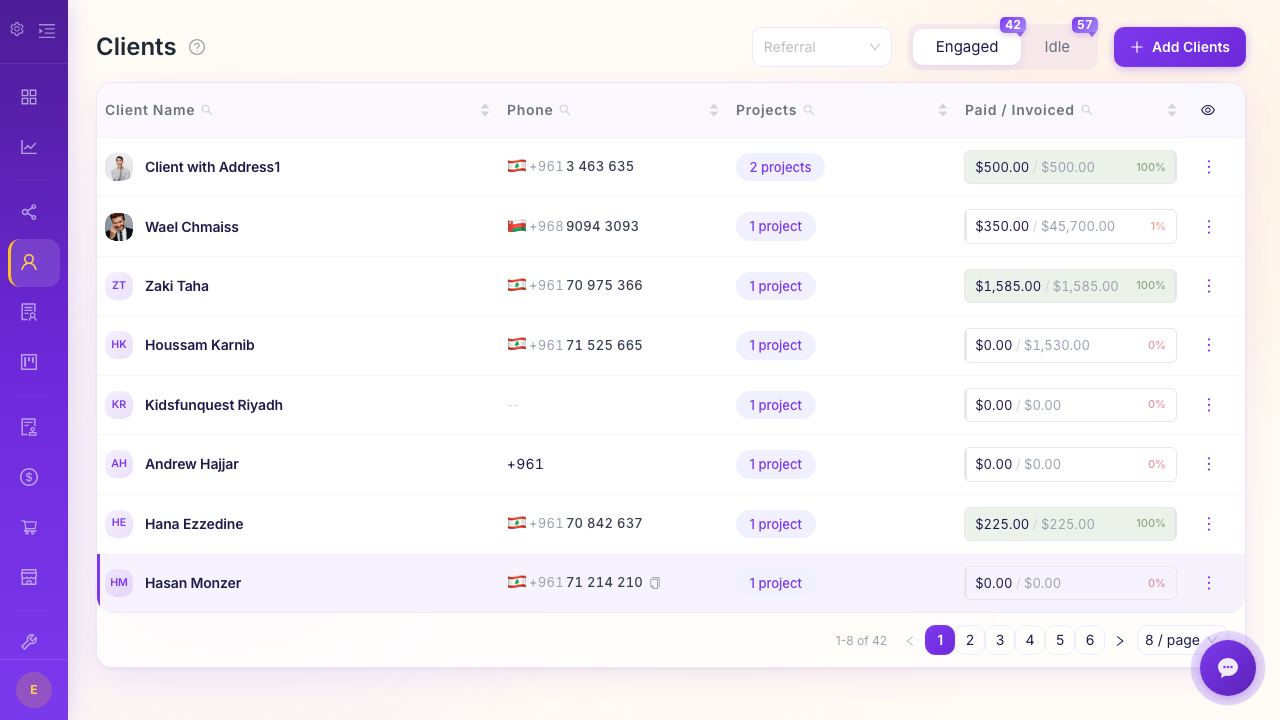

Our customer success lead, Rima, told me she uses it before every client check-in call. She types "show me [client name] project status, outstanding invoices, and recent payments" and gets a briefing in seconds. She used to prep for those calls by opening three different screens and taking notes. Not anymore.

What It's Bad At (Honesty Matters)

I want to be straight about the limitations because overpromising on AI is a disease in this industry.

Ambiguous questions get ambiguous answers. If you ask "how are we doing?" the chatbot will take a guess at what you mean, and it might guess wrong. Be specific. "What's our total invoiced amount for Q1 2026?" works great. "How's the business?" does not.

Complex multi-step analysis is still better done by a human with a spreadsheet or BI tool. The chatbot can tell you your top 5 clients by revenue. It can tell you your average project duration. But asking it to build a profitability model that accounts for employee costs, overhead allocation, and payment timing? That's not what it's for.

It occasionally gets calculations slightly wrong on edge cases - things like date boundaries, or how to handle partially paid invoices in a revenue calculation. We've been tightening this up continuously, but I'd always sanity-check a number before putting it in a board presentation. Use it for operational questions, not for your annual financial statements.

The Rate Limiting Decision

We rate-limit the chatbot to 10 requests per minute per user. Some people thought that was too low. But running LLM queries costs real money, and we didn't want a situation where one power user's automation script racks up a $500 API bill in an afternoon.

10 per minute is more than enough for human usage. Nobody is manually typing more than 10 questions in 60 seconds. If you're hitting the limit, you're probably doing something programmatic, and we'd rather have that conversation than silently eat the cost.
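For anyone implementing something similar, a per-user limit like this can be sketched as a sliding-window counter. The version below is a minimal in-memory illustration in Python; the class name and the injectable `clock` parameter are invented here, and a multi-server deployment would need shared storage instead of a local dictionary.

```python
import time
from collections import defaultdict, deque


class PerUserRateLimiter:
    """Sliding-window limiter: at most max_requests per window, per user."""

    def __init__(self, max_requests=10, window_seconds=60.0, clock=time.monotonic):
        self._max = max_requests
        self._window = window_seconds
        self._clock = clock  # injectable so tests do not have to sleep
        self._hits = defaultdict(deque)  # user id -> timestamps of recent requests

    def allow(self, user_id) -> bool:
        now = self._clock()
        hits = self._hits[user_id]
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self._window:
            hits.popleft()
        if len(hits) >= self._max:
            return False  # over the limit: reject without recording the attempt
        hits.append(now)
        return True


if __name__ == "__main__":
    limiter = PerUserRateLimiter()
    results = [limiter.allow("user-1") for _ in range(11)]
    print(results.count(True), "allowed,", results.count(False), "rejected")
```

Because rejected attempts are not recorded, a script hammering the endpoint does not extend its own lockout; it simply regains capacity as old requests age out of the 60-second window.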

What This Means for Service Companies

The bigger picture here is that business intelligence is becoming conversational. Not just for big companies with data teams and Tableau licenses. For 15-person agencies that just want to know how their month is going.

I've watched our users go from "I should check the dashboard more often" to "let me just ask." Data consumption went up dramatically - not because we added more data, but because we removed the friction of accessing it. Same data, different interface, completely different usage pattern.

The folks who get the most value aren't the technical ones. They're the account managers, the ops people, the founders - people who need answers but don't want to build reports. They just want to ask a question and move on with their day.

That's what we built. Not a replacement for proper analytics. A way to get quick answers to real questions without leaving what you're doing. Turns out that's what most people needed all along.